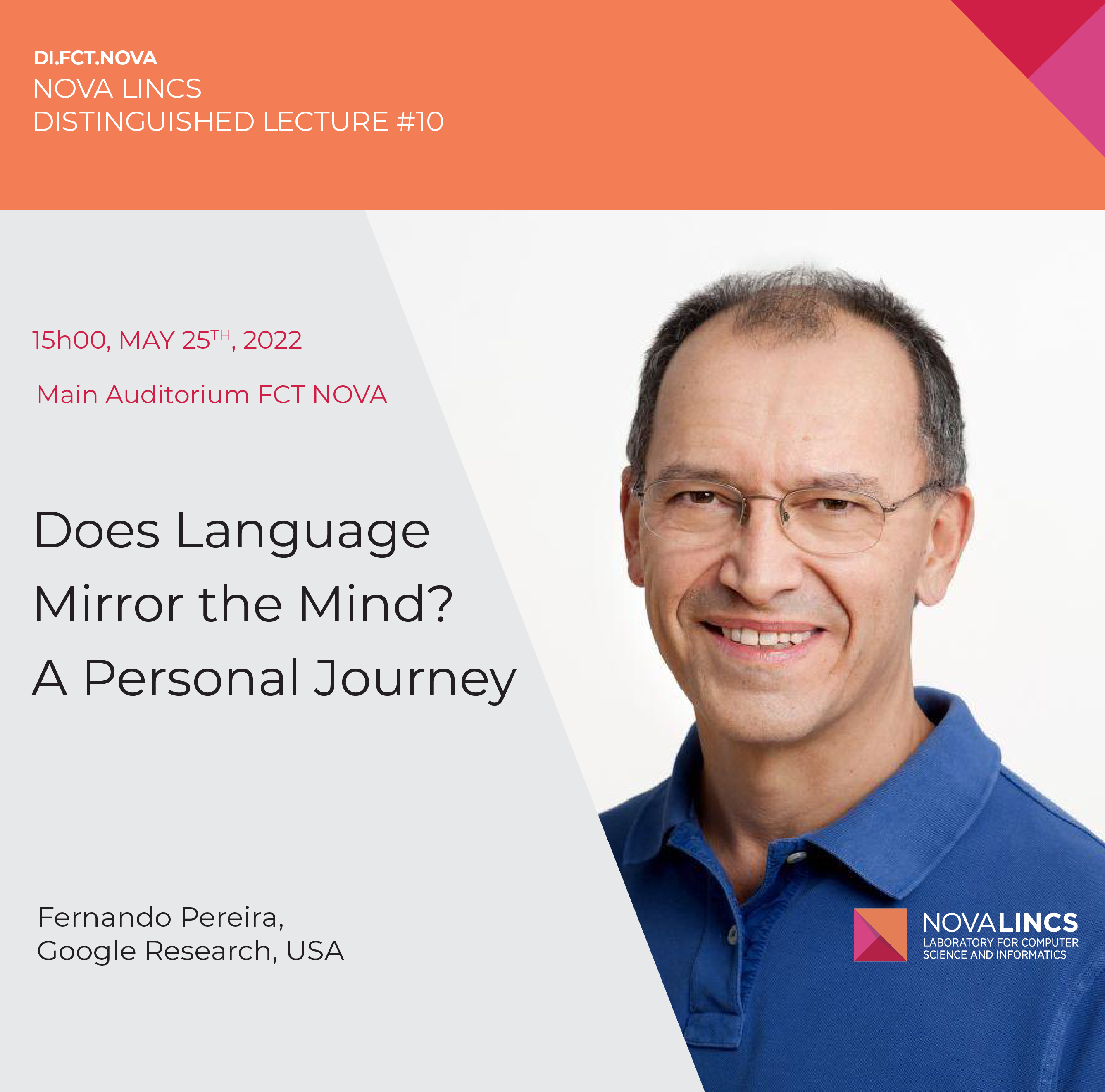

- DI FCT NOVA - NOVA LINCS DISTINGUISHED LECTURE #10

- May 2022

Abstract

Over 20 years ago, I wrote the following in an invited paper:

> Given the enormous conceptual and technical difficulties of building a comprehensive theory of grounded language processing, treating language as an autonomous system is very tempting. However, there is a weaker form of grounding that can be exploited more readily than physical grounding, namely grounding in a linguistic context. Following this path, sentences can be viewed as evidence for other sentences through inference, and the effectiveness of a language processor may be measured by its accuracy in deciding whether a sentence entails another, or whether an answer is appropriate for a question.

This path turned out to be even more productive than I imagined, though with different technical tools than the ones I favored then. So, is language-internal modeling all we need for creating language-using systems that pass the (Turing?) test? I will illustrate the question with a sequence of examples drawn from the work of many collaborators and colleagues, not answering it decisively, but still marveling at how so much of the contents and processes of our minds can be mechanically inferred from our constant chatter.

Bio

Fernando Pereira is Vice President and Engineering Fellow at Google, where he leads research and development in natural language understanding and machine learning. His previous positions include chair of the Computer and Information Science department of the University of Pennsylvania, head of the Machine Learning and Information Retrieval department at AT&T Labs, and research and management positions at SRI International.

He received a Ph.D. in Artificial Intelligence from the University of Edinburgh in 1982, and has over 120 research publications on computational linguistics, machine learning, bioinformatics, speech recognition, and logic programming, as well as several patents.

He was elected AAAI Fellow in 1991 for contributions to computational linguistics and logic programming, ACM Fellow in 2010 for contributions to machine learning models of natural language and biological sequences, and ACL Fellow for contributions to sequence modeling, finite-state methods, and dependency and deductive parsing. He was president of the Association for Computational Linguistics in 1993.